Almost every software project eventually runs into the same nagging question: is automated testing worth the money and effort when development itself already consumes so much time and so many resources? If you haven’t faced this question yet, you’re walking blindly towards a dangerous pitfall.

What’s automated testing?

In a nutshell, all software testing activities fall into two major categories:

- Manual testing

- Automated testing

In most cases, these two approaches to verifying your software should be practised side by side. The dividing line is seemingly simple: any repetitive test case can be automated. Automating means writing the test cases and crafting a script that can then run the same or similar checks again and again.

Manual testing, in turn, should be applied when you need to check a specific function and a dedicated script either couldn’t be reused or would be so complicated to develop that its cost can’t be justified.
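To make the automated side concrete, here is a minimal sketch of such a script in Python, using the standard `unittest` module. The `apply_discount` function and its rules are hypothetical stand-ins for real product logic; the point is only that the same checks can be rerun at no extra effort.

```python
import unittest


def apply_discount(price, percent):
    """Hypothetical business rule: reduce a price by a percentage."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return round(price * (100 - percent) / 100, 2)


class DiscountTests(unittest.TestCase):
    """Checks a tester would otherwise repeat by hand after every change."""

    def test_typical_discount(self):
        self.assertEqual(apply_discount(200.0, 25), 150.0)

    def test_zero_discount_keeps_price(self):
        self.assertEqual(apply_discount(80.0, 0), 80.0)

    def test_invalid_percent_is_rejected(self):
        with self.assertRaises(ValueError):
            apply_discount(80.0, 120)


def run_checks():
    """Run the whole suite and report whether every check passed."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(DiscountTests)
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    return result.wasSuccessful()
```

Calling `run_checks()` after each change repeats all three checks in a fraction of a second, which is exactly the kind of task that is tedious to do by hand.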

Why should I care about automated testing?

As a matter of fact, many small businesses and startups stick to manual and ad hoc testing for reasons of cost and time. If you have an MVP created solely to gather feedback and validate a business idea, manual testing gets you to market faster. There are other reasons to test software ad hoc as well; the explainer article by DDI Development explores them in more depth.

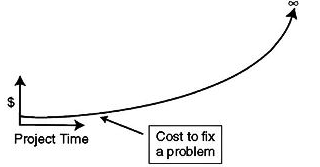

However, any sustainable software product undergoes constant changes and improvements throughout its life cycle, unless it’s dead. As your platform thrives and gains new functional modules, the costs of finding and fixing the same kinds of problems grow steeply.

Why? Well, every time your engineering team integrates a new module or feature, one question arises: “Has anything broken after the update?” At this point you can either retest old features manually over and over again or let a script take care of possible regressions. You may invest a lot in building this script at the early stages, but you eventually reduce costs as the product evolves. And some testing tasks are so complex to perform manually that automation is the only option.
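The trade-off between the upfront investment and the later savings can be put into simple arithmetic. The sketch below is a deliberate simplification: the function name and all figures are hypothetical, and it ignores the cost of maintaining the script.

```python
import math


def break_even_runs(script_cost_hours, manual_hours_per_run, automated_hours_per_run):
    """Return the first regression run at which automation is cheaper overall.

    Total manual effort after n runs:    n * manual_hours_per_run
    Total automated effort after n runs: script_cost_hours + n * automated_hours_per_run
    """
    saving_per_run = manual_hours_per_run - automated_hours_per_run
    if saving_per_run <= 0:
        return None  # automation never pays off under these estimates
    # Smallest whole n with n * saving_per_run > script_cost_hours.
    return math.floor(script_cost_hours / saving_per_run) + 1


# Assumed figures: 40 hours to build the script, 4 hours per manual pass,
# half an hour to run and review the automated pass.
print(break_even_runs(40, 4, 0.5))  # prints 12
```

With these assumed numbers, the script pays for itself on the twelfth regression run. A product that is retested after every release reaches that point quickly, while a throwaway MVP may never get there.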

How can I decide which tasks should be automated?

Although these tasks may seem obvious, the difficulty lies in their complexity and in the layers at which automation should be applied. To address these challenges, the QA team at DDI Development, for instance, discusses each task individually with stakeholders and outlines the cost that each test case will require.

Layers of automation:

- GUI : The user interface, or presentation layer, is where a user interacts with a web service.

- API : Program-level logic, tested by calling the application’s interfaces directly rather than through its screens.

- Database : The processes related to data integrity, deletions, and insertions.
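As an illustration of the database layer, the following sketch runs automated checks for insertion, deletion, and data integrity against an in-memory SQLite database. The `users` table, its columns, and the helper names are assumptions made for the example.

```python
import sqlite3


def create_schema(conn):
    """Create a hypothetical users table with a uniqueness constraint."""
    conn.execute(
        "CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT NOT NULL UNIQUE)"
    )


def check_insert_and_delete(conn):
    """Verify that an inserted row is stored and then fully removed."""
    conn.execute("INSERT INTO users (email) VALUES (?)", ("a@example.com",))
    stored = conn.execute("SELECT COUNT(*) FROM users").fetchone()[0]
    conn.execute("DELETE FROM users WHERE email = ?", ("a@example.com",))
    remaining = conn.execute("SELECT COUNT(*) FROM users").fetchone()[0]
    return stored == 1 and remaining == 0


def check_integrity(conn):
    """Verify that the database rejects a duplicate email."""
    conn.execute("INSERT INTO users (email) VALUES (?)", ("b@example.com",))
    try:
        conn.execute("INSERT INTO users (email) VALUES (?)", ("b@example.com",))
    except sqlite3.IntegrityError:
        return True  # the constraint held, as expected
    return False


def run_database_checks():
    """Run every check against a throwaway in-memory database."""
    conn = sqlite3.connect(":memory:")
    try:
        create_schema(conn)
        return check_insert_and_delete(conn) and check_integrity(conn)
    finally:
        conn.close()
```

Because the database lives in memory, each run starts from a clean state, so the checks cannot be affected by data left behind by an earlier run.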

Areas of automation:

- Regression testing : Every time code is changed or upgraded, there is a chance that the update breaks some existing features.

- Smoke testing : Unlike in-depth regression testing, smoke testing makes sure that the core, most basic functions still work after an upgrade.

- Load and performance testing : Automation gives a clear picture of how a product behaves under high load.
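The difference between smoke and regression testing can be sketched with one test class and two suites. In the example below, the `add_to_cart` function and the choice of which test counts as "core" are hypothetical; a real project would make that choice together with stakeholders.

```python
import unittest


def add_to_cart(cart, item, quantity=1):
    """Hypothetical product logic used as the system under test."""
    if quantity < 1:
        raise ValueError("quantity must be at least 1")
    cart[item] = cart.get(item, 0) + quantity
    return cart


class CartTests(unittest.TestCase):
    def test_add_single_item(self):  # core behaviour, part of the smoke suite
        self.assertEqual(add_to_cart({}, "book"), {"book": 1})

    def test_add_same_item_twice(self):
        cart = add_to_cart({}, "book")
        self.assertEqual(add_to_cart(cart, "book", 2), {"book": 3})

    def test_reject_zero_quantity(self):
        with self.assertRaises(ValueError):
            add_to_cart({}, "book", 0)


SMOKE_TESTS = ["test_add_single_item"]  # assumed selection of core checks


def build_suite(kind):
    """Return the small smoke suite or the full regression suite."""
    if kind == "smoke":
        return unittest.TestSuite(CartTests(name) for name in SMOKE_TESTS)
    return unittest.defaultTestLoader.loadTestsFromTestCase(CartTests)


def run(kind):
    """Run the chosen suite; return (number of tests run, all passed?)."""
    result = unittest.TextTestRunner(verbosity=0).run(build_suite(kind))
    return result.testsRun, result.wasSuccessful()
```

Here `run("smoke")` executes a single quick check, while `run("regression")` executes all three. The smoke suite suits a fast sanity pass after every upgrade, and the full suite suits a deeper pass before a release.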

The baseline for the decision is a clearly articulated separation between manual and automated test cases.

Are there any means to streamline automated testing?

Currently, the market offers hundreds of tools that make automated testing faster and help tailor it to your product.

Software testing frameworks

Our QA team often uses Selenium. Selenium IDE, for instance, records testing actions so that the system can then replay the recorded tests by itself. We write our scripts by hand, and Selenium offers a choice of languages for this, including Java, Python, Ruby, C#, and JavaScript, with community-maintained bindings for others such as Perl and PHP. We go with Java for testing because it has a large QA community, even though our web development team specializes in Python/Django and PHP/Symfony. Still, the language for testing is a matter of personal choice and technical expertise.
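For a feel of what a hand-written Selenium script involves, here is a hedged sketch of a GUI-level login check. It is written in Python only for brevity, not because it reflects the team’s Java code. The URL path, field names, and expected page title are hypothetical, and the check accepts any object that behaves like a Selenium WebDriver, so it can be exercised without a browser.

```python
def check_login_page(driver, base_url, username, password):
    """Log in through the UI and report whether the dashboard opened.

    `driver` is expected to behave like a Selenium WebDriver. The "/login"
    path, the field names, and the "Dashboard" title are assumptions.
    """
    driver.get(f"{base_url}/login")
    # "name" and "css selector" are the string values behind Selenium's
    # By.NAME and By.CSS_SELECTOR locator constants.
    driver.find_element("name", "username").send_keys(username)
    driver.find_element("name", "password").send_keys(password)
    driver.find_element("css selector", "button[type='submit']").click()
    return "Dashboard" in driver.title


def run_in_chrome(base_url, username, password):
    """Run the check in a real browser; requires the selenium package."""
    from selenium import webdriver  # imported lazily so the check stays testable

    driver = webdriver.Chrome()
    try:
        return check_login_page(driver, base_url, username, password)
    finally:
        driver.quit()  # always close the browser, even if the check fails
```

A production script would likely add explicit waits before reading the title, since pages rarely finish loading the instant a button is clicked.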

Continuous integration systems

Besides the broad spectrum of development tasks that CI systems solve, they take test automation to its peak. If you have a test script, a CI system can run it repeatedly on a schedule or every time new code is committed. If a test fails, the CI system alerts the team and can generate a task to fix the problem. Our own CI choices are Bamboo and Jenkins.
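What a CI system typically needs from a test script is a clear pass or fail signal, and the usual convention is the process exit code. The sketch below shows one way to produce it with `unittest`; the `HealthCheck` class is a placeholder, and the wiring into Bamboo or Jenkins is left out because it depends on each setup.

```python
import unittest


class HealthCheck(unittest.TestCase):
    """Placeholder test; a real project would load its own test modules."""

    def test_arithmetic_still_works(self):
        self.assertEqual(sum([1, 2, 3]), 6)


def run_for_ci(test_case=HealthCheck):
    """Run the tests and return an exit code: 0 on success, 1 on failure."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(test_case)
    result = unittest.TextTestRunner(verbosity=1).run(suite)
    return 0 if result.wasSuccessful() else 1
```

A build step could end with `sys.exit(run_for_ci())`, so that a failing test produces a non-zero exit code and the build is marked as failed.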

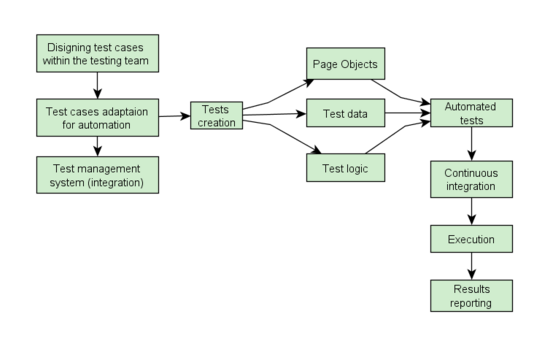

Thus, the entire testing scenario may look like this:

Why is it so important to practise both?

Any script is a narrow-purpose mechanism that can’t cover every detail, in particular how moving through an interface looks and feels. Manual testing helps you understand the real issues that users encounter. And some techniques, such as exploratory (ad hoc) testing, rely on intricate human thinking to challenge software in sophisticated ways with constantly changing variables. For this very reason, automated testing should always be combined organically with manual QA activities.